The euro crisis has been with us for several years now, but some fundamental questions still have to be answered before there can be stability in the eurozone. In case you haven’t been following the news out of Europe, here’s some brief background on the situation.

After the euro was introduced in 1999 (with notes and coins following in 2002), eurozone member countries enjoyed historically low interest rates on their government bonds. Investors were willing to lend to these countries at such low rates because they believed there was an implicit guarantee behind every government bond denominated in euros. That is, they believed Greek bonds carried the same default risk as German bonds simply because both countries were members of the eurozone. Once the global recession hit in 2008, many countries on the periphery of the eurozone started to run substantial budget deficits (and revealed that past deficits were larger than previously reported), which quickly disabused investors of their one-size-fits-all approach to risk valuation. That led to the rising interest rates shown in the graph below. The rapid rise in interest rates made it more expensive for these governments to service their debt, adding to their already ballooning deficits. Larger deficits spurred higher interest rates, and the vicious cycle continued until the European Central Bank (ECB) took decisive action to put a ceiling on certain countries’ interest rates, temporarily halting the crisis.

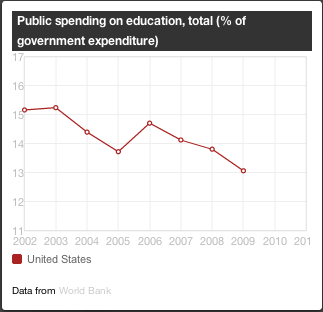

Before the crisis can be permanently put to rest, Germany needs to decide how much austerity (i.e., spending cuts and tax increases) and reform it ultimately wants from the periphery countries: Greece, Italy, Portugal, Spain, and Ireland. In an ideal world for Germany, these heavily indebted countries would slash their national budgets quickly and severely to repent for their previous fiscal sins. Unfortunately for the Germans, and for the other strong eurozone countries like Finland and the Netherlands, the first steps toward austerity in the periphery have been a drag on those countries’ GDP growth, which has worsened their debt-to-GDP ratios and made already hesitant international investors more skeptical of their ability to pay off their debts. Furthermore, German fears of hyperinflation, which the country experienced during the interwar period of the 1920s, have prevented the ECB from pursuing looser monetary policy. If the ECB were to announce that it would tolerate inflation closer to 4 or 5 percent, rather than 2 percent (see graph below), then periphery countries could inflate some of their debt away and, more importantly, real wages would fall more quickly, bringing them closer to their market-clearing level.
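To make the debt arithmetic concrete, here is a minimal Python sketch of how a debt-to-GDP ratio moves when nominal GDP changes while the nominal debt stock stays put. Everything in it is an illustrative assumption rather than data for any real country: the function name `debt_to_gdp_path`, the starting debt of 120 against a GDP of 100, and the growth and inflation rates. It also ignores new borrowing and any reaction of interest rates to higher inflation, so treat it as a back-of-the-envelope illustration only.

```python
def debt_to_gdp_path(debt, gdp, real_growth, inflation, years):
    """Return the debt-to-GDP ratio at the end of each year.

    Simplifying assumption: the nominal debt stock is held fixed
    (no new deficits, no repayment), so only nominal GDP moves.
    """
    ratios = []
    for _ in range(years):
        # Nominal GDP grows with both real output and the price level.
        gdp *= (1 + real_growth) * (1 + inflation)
        ratios.append(debt / gdp)
    return ratios


# Hypothetical economy: debt of 120 against GDP of 100 (ratio of 1.2).
low_inflation = debt_to_gdp_path(120.0, 100.0, 0.0, 0.02, 10)
high_inflation = debt_to_gdp_path(120.0, 100.0, 0.0, 0.05, 10)
# Austerity-style scenario: output shrinks 3 percent a year at 2 percent inflation.
shrinking = debt_to_gdp_path(120.0, 100.0, -0.03, 0.02, 10)

for label, path in [
    ("2% inflation, flat output", low_inflation),
    ("5% inflation, flat output", high_inflation),
    ("2% inflation, shrinking output", shrinking),
]:
    print(f"{label}: ratio after 10 years = {path[-1]:.2f}")
```

Under these assumptions, higher inflation shrinks the ratio faster than 2 percent inflation does, while a contracting economy pushes the ratio up even though the country has not borrowed another cent.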

As of November 2012, unemployment in the eurozone was 11.6%, while Spain and Greece had 26% and 25% unemployment, respectively. With such a large percentage of their labor forces sitting idle, these countries are failing to achieve their potential economic output, which only exacerbates their debt problems.

Germany is determined to make the budgets of the periphery countries as austere as possible to atone for their past profligacy. This seems somewhat reasonable, given that those countries committed to running small deficits as a condition of joining the eurozone. But if fiscal consolidation is a rational demand to make of these countries, that only leaves looser monetary policy as a way to prevent a breakup of the eurozone. The important question is this: why should Germany, especially given its intimate history with hyperinflation, tolerate a rise in the inflation rate? Put simply, it must do so because it has benefited the most from eurozone-wide low and stable inflation. Prior to the creation of the eurozone, countries like Italy, Greece, and Spain would devalue their currencies to make their exports more competitive in the global marketplace. German workers are notoriously more productive than their counterparts in Southern Europe, so currency devaluation served as a convenient way for those countries to level the playing field in the export market. Now that there is a single currency, that option is off the table.

The New York Times recently ran an op-ed by Gunnar Beck arguing that Germany did not benefit the most from the creation of the eurozone. His main evidence concerned imports and exports, which we would expect to be most affected by the move to a single currency:

“German exports rose most — by 154 percent — to the rest of the world; by 116 percent to non-euro E.U. members; and least of all, 89 percent, to other euro zone members. In 1998 the euro zone still accounted for 45 percent of all German exports; in 2011 that share had declined to 39 percent.”

Though this information may appear to imply that Germany does not in fact owe the other countries in the eurozone anything, it neglects to account for the most significant global economic trend of the past twenty years: the rapid and sustained growth of the emerging economies (Brazil, Russia, India, China, and others). Those countries, and many others included in the “rest of the world” category, have experienced years of double-digit growth in the past couple of decades. When growth in German exports is measured from a 1998 base year, of course the increase in exports to the rest of the world is larger than the increase in exports to other eurozone countries: the rest of the world was growing extremely fast!
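The share figures in the quote follow mechanically from the growth figures, which a few lines of Python can show. The sketch below normalizes total German exports in 1998 to 1 and asks how fast exports outside the eurozone must have grown for the eurozone share to fall from 45 to 39 percent. The function name `implied_growth_elsewhere` is my own, and the calculation treats the rounded percentages in the quote as exact and ignores changes in eurozone membership between 1998 and 2011, so the result is only approximate.

```python
# Figures quoted from the op-ed (growth rates as fractions, so 0.89 means 89 percent).
SHARE_1998 = 0.45
SHARE_2011 = 0.39
GROWTH_EUROZONE = 0.89
GROWTH_NON_EURO_EU = 1.16
GROWTH_REST_OF_WORLD = 1.54


def implied_growth_elsewhere(share_start, share_end, growth_inside):
    """Growth of exports outside the bloc implied by the bloc's share change.

    Total exports in the start year are normalized to 1.
    """
    inside_end = share_start * (1 + growth_inside)  # bloc exports in the end year
    total_end = inside_end / share_end              # total exports in the end year
    outside_end = total_end - inside_end            # everything outside the bloc
    outside_start = 1 - share_start
    return outside_end / outside_start - 1


implied = implied_growth_elsewhere(SHARE_1998, SHARE_2011, GROWTH_EUROZONE)
print(f"Implied growth of exports outside the eurozone: {implied:.0%}")
```

The implied figure lands between the 116 percent quoted for non-euro E.U. members and the 154 percent quoted for the rest of the world, so the numbers are internally consistent. It also shows that the falling share does not mean exports to the eurozone stagnated: they nearly doubled, and the share fell only because everything else grew faster.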

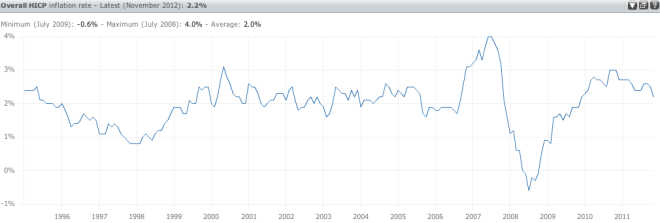

The data put forth by Mr. Beck obscure the real and significant benefit Germany receives from the euro: control over the inflation rates of a large number of its trading partners. Countries like Greece can no longer run double-digit rates of inflation to make their exports more competitive relative to Germany’s. To give you an idea of how dramatic a shift this is, look at the chart below of Greece’s inflation rate history:

Prior to its adoption of the euro, Greece depended heavily on currency depreciation to offset the low productivity of its labor force and to maintain the competitiveness of its exports on world markets. Now Greece, and every other country struggling with burdensome debt levels, is committed to 2% inflation forever because Germans neither need nor want the price level to rise more rapidly. But if Germany wants to safeguard the progress made toward European unification, it needs to let the ECB pursue a higher inflation target, possibly in conjunction with an unemployment target similar to the one adopted by the Federal Reserve this month. Germany owes the eurozone at least that much.